If you work in QA, this scenario probably sounds familiar. It’s Monday morning, you’re just getting into the flow, and CI is already red. Half your E2E test suite is failing. You panic and rush to check the logs, expecting a real logic failure, like broken authentication. Instead, you’re staring at a selector that points to a CSS class that no longer exists. Nothing is actually broken for users. The UI just changed.

End-to-end (E2E) testing was supposed to protect us from precisely this kind of scenario. The goal was simple: make sure users can successfully check out a product on an ecommerce platform, update their preferences, or sign up for an account. Somewhere along the way, our tests stopped reflecting that goal.

Instead of validating user behavior, we end up validating that the UI hasn’t changed since the last time we looked at it. We’re testing implementation details instead of user outcomes.

But what would happen if we focused on what a user is trying to accomplish, not the exact element they happen to click? Let’s find out!

The Hidden Cost of Selectors

CSS selectors are easy to write upfront. They are fundamental to UI development and widely used in automated testing. Still, they are expensive: not upfront, but later, in maintenance and flaky tests. You know how it goes: your team should be shipping features, but they’re busy debugging and fixing flaky tests instead.

With every test that relies on deep selectors or XPath, you’re betting on stability. But software is fluid: DOM structures evolve, refactors happen, and design systems get updated. All of this leads to test failures, not because the functionality is broken, but because the path to that functionality has shifted.

That’s the difference between testing an implementation and testing the intent behind it. With the former, you’re asking, “Is the button still in the same location, and does it still have the same CSS class?” With the latter, your question is, “Can the user still check out?”

The first breaks after a small UI tweak, such as moving a button. The second doesn’t care where the button is; it only cares whether the user can use it to add an item to the cart.

The real cost here is maintenance time. Every minute spent updating a broken selector is a minute not spent building features. Your QA team stops acting as a quality gatekeeper and slowly turns into a team of test maintainers.
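To make the header-versus-intent distinction concrete, here is a deliberately simplified TypeScript model. The `UiNode` type, both lookup functions, and all class names are hypothetical; real browsers and test runners work on the actual DOM and accessibility tree, which is far richer than this sketch. It contrasts a lookup that pins an exact structural path with one that only asks "is there a button whose label says add to cart?"

```typescript
// Toy model of a rendered page. Everything here is hypothetical and far simpler
// than a real DOM or accessibility tree.
interface UiNode {
  classes: string[];
  role?: string; // accessible role, e.g. "button"
  label?: string; // accessible name, e.g. "Add to cart"
  children: UiNode[];
}

// Implementation-level lookup: walk an exact chain of class names from the root,
// the way a deep, structure-bound CSS selector does.
function findByClassPath(root: UiNode, path: string[]): UiNode | undefined {
  let current = root;
  for (const cls of path) {
    const next = current.children.find((child) => child.classes.includes(cls));
    if (!next) return undefined;
    current = next;
  }
  return current;
}

// Intent-level lookup: search the whole tree for any element with the expected
// role whose label matches what the user wants to do, wherever it lives.
function findByIntent(root: UiNode, role: string, label: RegExp): UiNode | undefined {
  if (root.role === role && root.label !== undefined && label.test(root.label)) {
    return root;
  }
  for (const child of root.children) {
    const hit = findByIntent(child, role, label);
    if (hit) return hit;
  }
  return undefined;
}

const addToCartButton = (cls: string): UiNode => ({
  classes: [cls],
  role: "button",
  label: "Add to cart",
  children: [],
});

// Before the redesign: the button sits in the header.
const before: UiNode = {
  classes: ["page"],
  children: [{ classes: ["header"], children: [addToCartButton("btn-primary")] }],
};

// After the redesign: renamed class, moved to a sticky footer. Same user action.
const after: UiNode = {
  classes: ["page"],
  children: [
    { classes: ["header"], children: [] },
    { classes: ["sticky-footer"], children: [addToCartButton("cta")] },
  ],
};

const selectorPath = ["header", "btn-primary"];
console.log(findByClassPath(before, selectorPath) !== undefined); // true
console.log(findByClassPath(after, selectorPath) !== undefined); // false: the selector broke
console.log(findByIntent(after, "button", /add to cart/i) !== undefined); // true
```

The intent lookup constrains what the element is for (its role and label) rather than where it sits, which is similar in spirit to role-based locators such as Playwright's `getByRole`.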

Reframing E2E Tests

To get real value from end-to-end testing, you need to start thinking like a user. That requires a conceptual shift: stop thinking like a crawler that hunts for classes, IDs, and other attributes just to test a button or a card component. Move from “click this element” to “accomplish this goal.”

Users don’t navigate your app by selectors. They never stop to think, “I need to click the element with data-testid="add-to-cart-btn".” Their thought process is more like, “I want to add this item to the cart.” That’s the intent, and that’s what you should test.

Let’s look at what this means in practice with an example.

Bad approach: Step-based testing

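```typescript
import { test, expect } from '@playwright/test';

test('add to cart (step-based)', async ({ page }) => {
  await page.goto('/seven-eleven');
  await page.locator('[data-testid="add-cart-btn"]').click();
  // Assumes a cart badge exposed as data-testid="cart-count" (hypothetical).
  await expect(page.locator('[data-testid="cart-count"]')).toHaveText('1');
});
```

Good approach: Goal-driven testing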

“Add a product to the shopping cart from a merchant page.”

See the difference? The first approach is a step-by-step script tied to one specific selector, which makes it brittle: any change to the data-testid, CSS class, or ID it relies on leads to a test failure. The second approach describes the goal and what should happen. The how becomes flexible.

Before: Tests validate that specific elements exist in specific places.

After: Tests validate that users can accomplish their goals.

How AI Tools Learn What Users Are Trying to Do

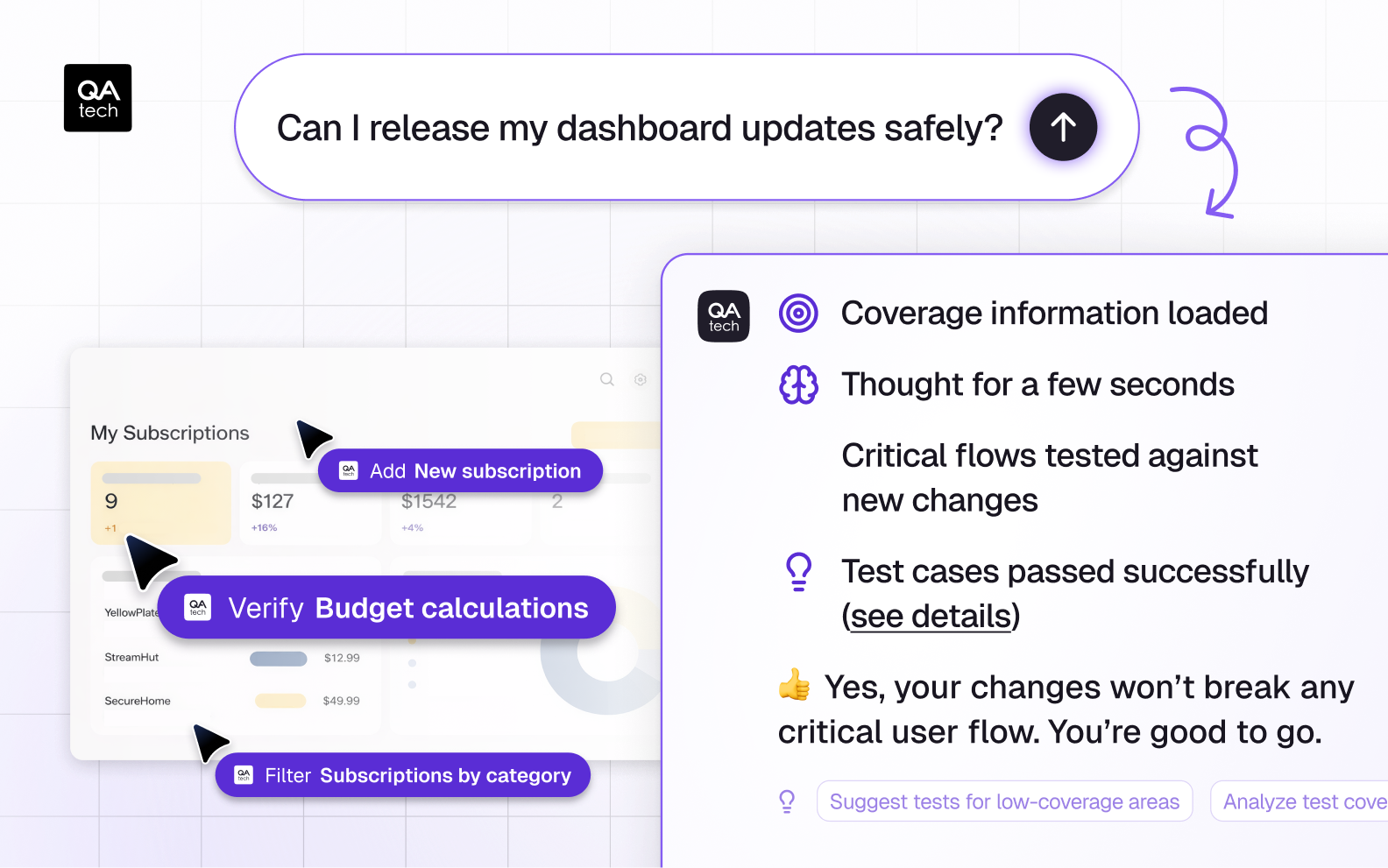

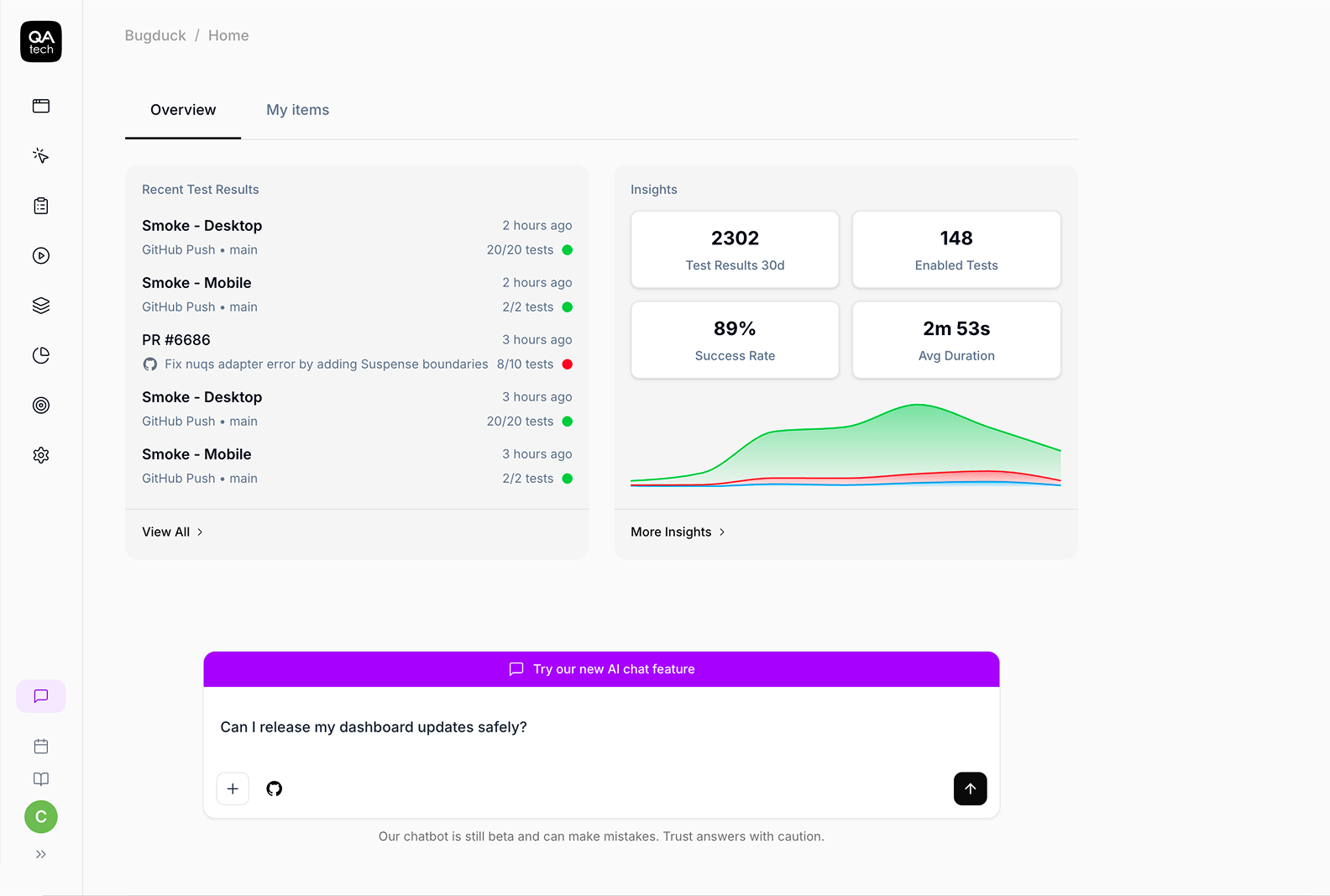

AI is changing the testing industry fast, and that’s where tools like QA.tech come into play. Plenty of automation tools let you write and run test scripts. QA.tech takes a different approach: it observes real user journeys and builds a structural understanding of what each interaction accomplishes.

Here’s how it works in practice: the tool analyzes user behavior patterns in your app. For example, it understands how a user goes from landing on the home page to searching for a specific product, adding it to the cart, and proceeding to checkout.

It recognizes that “add to cart” is an action that might happen through different buttons or workflows, depending on the context.

When the UI is updated (for instance, when the “Proceed to payment” button moves from the header to a sticky footer), the tool finds a new path to the same goal. The intent behind “proceed to payment” remains the same, so the tool can adapt to the new UI structure and complete the validation.
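The article doesn’t describe QA.tech’s internals, so the following is only a toy illustration of the general idea of finding a new path to a fixed goal: model screens and actions as a graph, pin down what success means, and let a breadth-first search discover the route. The graph, the screen names, the extra “Review order” step, and `findPathToGoal` are all hypothetical; a real tool also has to recognize actions in the live UI, which this sketch skips entirely.

```typescript
// Toy model: screens are nodes, visible actions are labeled edges.
// Screen names and labels are hypothetical.
type Screen = string;
interface Action {
  label: string;
  to: Screen;
}
type UiGraph = Partial<Record<Screen, Action[]>>;

// Breadth-first search for the shortest sequence of actions that takes a user
// from `start` to any screen satisfying `isGoal`. Returns null if unreachable.
function findPathToGoal(
  graph: UiGraph,
  start: Screen,
  isGoal: (screen: Screen) => boolean,
): string[] | null {
  const queue: { screen: Screen; path: string[] }[] = [{ screen: start, path: [] }];
  const visited = new Set<Screen>([start]);
  while (queue.length > 0) {
    const { screen, path } = queue.shift()!;
    if (isGoal(screen)) return path;
    for (const action of graph[screen] ?? []) {
      if (!visited.has(action.to)) {
        visited.add(action.to);
        queue.push({ screen: action.to, path: [...path, action.label] });
      }
    }
  }
  return null;
}

// The goal stays fixed: the user reaches the payment screen.
const reachedPayment = (screen: Screen) => screen === "payment";

// Old layout: "Proceed to payment" sits in the header of the cart page.
const oldUi: UiGraph = {
  product: [{ label: "Add to cart", to: "cart" }],
  cart: [{ label: "Proceed to payment (header)", to: "payment" }],
};

// New layout: the button moved to a sticky footer, after a new review step.
const newUi: UiGraph = {
  product: [{ label: "Add to cart", to: "cart" }],
  cart: [{ label: "Review order", to: "review" }],
  review: [{ label: "Proceed to payment (sticky footer)", to: "payment" }],
};

console.log(findPathToGoal(oldUi, "product", reachedPayment));
console.log(findPathToGoal(newUi, "product", reachedPayment));
```

The first call returns the two-step route through the header button; the second returns a three-step route ending at the sticky-footer button. The definition of success, `reachedPayment`, never changes between layouts.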

In QA.tech, you can describe what you want to test in natural language, and the tool figures out how to validate it.

This isn’t magic or a black-box AI operating in mysterious ways. It’s the tool’s ability to observe real user journeys and use those observations to generate tests that focus on outcomes.

Why This Changes Everything

The benefits of this approach are concrete and immediate:

- Minimal maintenance: For simpler products, maintenance can drop to near zero with intent-based testing. Even in more complex cases, tests don’t break just because a UI engineer ships a visual refresh or your team migrates to a new component library.

- Increased team velocity: When your QA team stops being test maintainers and starts being quality advocates, you can ship features alongside developers without fear of breaking the test suite.

- Lower costs: When tests stop breaking on cosmetic UI changes and the suite can grow without a matching rise in failures, your team spends less time on upkeep, and operational costs go down.

- Easier scaling: Intent-based testing breaks the linear growth of traditional testing, where more tests mean more people to maintain them. This lets you scale the product without scaling the team.

Here’s a real-world scenario: you run an A/B test comparing two different checkout flows. With selector-based tests, you would have to maintain two separate test suites, one per variant. Intent-based testing changes the game. It doesn’t care which UI path each checkout flow takes; what matters is whether the purchase completes successfully.
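A toy TypeScript simulation of that A/B scenario shows the shape of the idea: two variants built from different steps, and one outcome check shared by both. The state fields, step functions, and variant definitions are hypothetical stand-ins for real UI pages; an actual test would drive the browser rather than call functions directly.

```typescript
// Hypothetical checkout state; each "step" stands in for a UI page or action.
interface CheckoutState {
  cart: string[];
  shippingSet: boolean;
  paid: boolean;
  orderPlaced: boolean;
}

type Step = (s: CheckoutState) => CheckoutState;

// Building blocks shared by the two hypothetical variants.
const setShipping: Step = (s) => ({ ...s, shippingSet: true });
const pay: Step = (s) => ({ ...s, paid: s.cart.length > 0 });
const placeOrder: Step = (s) => ({ ...s, orderPlaced: s.shippingSet && s.paid });

// Variant A: classic three-page checkout.
const variantA: Step[] = [setShipping, pay, placeOrder];
// Variant B: one-click express checkout that does everything in a single step.
const expressCheckout: Step = (s) => placeOrder(pay(setShipping(s)));
const variantB: Step[] = [expressCheckout];

// Apply a flow's steps in order, starting from the given state.
const runFlow = (flow: Step[], s: CheckoutState): CheckoutState =>
  flow.reduce((state, step) => step(state), s);

// The single intent-level check: did the purchase complete? It never looks at
// which steps or elements were used to get there.
const purchaseCompleted = (s: CheckoutState): boolean => s.orderPlaced;

const start: CheckoutState = { cart: ["coffee"], shippingSet: false, paid: false, orderPlaced: false };
console.log(purchaseCompleted(runFlow(variantA, start))); // true
console.log(purchaseCompleted(runFlow(variantB, start))); // true
```

Only the flows differ; the assertion is written once, so adding a third variant means adding a flow, not another suite of selectors.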

Now suppose you’re migrating your design system from Chakra UI to Material UI. With a selector-based approach, this could mean weeks of rewrites: every chakra-button selector breaks, data-testids need updating, and component references have to be fixed everywhere.

Intent-based tests don’t care whether the action happens through a Chakra or an MUI component. They only verify that the user can still add something to the cart and check out. Your UI migrates, and the tests keep passing.

And that’s exactly what QA.tech has been designed for. It adapts tests automatically, updating its understanding of how the same user goals can be accomplished after a UI refresh.

What You Still Control

An intent-based approach isn’t a set-and-forget setup, nor does it remove the need for human judgment. You still decide what matters.

Think of it as a force multiplier. Tools like QA.tech handle the repetitive, foundational work: the “grunt work” of clicking through basic user flows. That takes a sizable share of the workload off your plate, while you retain full control over your testing strategy.

However, some test cases will still need to be written manually, including edge cases that are unique to your business, complex multi-step procedures with specialized logic, and custom validations that require a solid understanding of your product. Intent-based testing enhances human expertise rather than replacing it.

QA.tech lowers your baseline workload by producing foundational test cases that ensure users can access your application, navigate it, and use its core features.

Most importantly, it allows your team to focus on what truly matters (complex business rules, unique edge cases, and new features) instead of maintaining existing test scripts.

You remain the product expert, and QA.tech simply gives you a way to scale your expertise across the testing process.

Wrapping It Up

Selector-based testing isn’t necessarily a bad idea. It just belongs to a different era. When interfaces were simple and rarely changed, selectors were stable enough to rely on. Today, however, UI layers move fast.

That’s why I believe our testing approach needs to evolve. Based on what I’ve seen in real-life projects, there are three shifts worth making.

First, stop testing implementation details. Your users don’t care about CSS classes or DOM structure. They care about outcomes, and your tests should reflect this. Second, think in terms of intent: describe what the user is trying to accomplish, not how they accomplish it. Third, use modern tools to handle the mechanics. Tools that understand intent adapt as the product evolves, providing consistency with far less maintenance.

To see how intent-based testing works in your app, book a demo or start testing with QA.tech today.