Last week, I had a phone call with a CTO who was panicking because his company had just gone through a reorganization. He had to downsize his team of eight QA engineers to two due to budget cuts. Not only was he sad to see six of his team members go, but he was also worried about maintaining the level of product quality without these engineers.

AI has brought many changes to the software development life cycle (SDLC). Teams are now moving faster and catching more bugs than ever. The old way of structuring QA, where you have manual testers sitting in one corner, automation engineers in a different location, and a QA lead trying to get everyone to work toward the same goal, is starting to feel seriously outdated. I, for one, am glad to see it go.

So, what does restructuring your QA team in 2026 actually look like? Let’s find out, based on the trends we are seeing at engineering organizations today.

The Old Org Chart

Most QA organizations still follow the classic structure: a QA manager, a lead, an architect, plus a bunch of manual testers waiting for direction. Everyone is organized around execution: who writes tests, who runs them, who triages the results. Meanwhile, the lead scrambles to prioritize what actually needs testing.

I mean, don’t get me wrong, this worked well when you used to ship monthly. You had time to execute complete regression test suites over the weekend or to argue about whether to update test case #387 or not.

However, you’re now delivering to customers daily (in many cases, multiple times per day), and the previous structure just doesn’t scale. I’ve watched multiple teams try to push this framework to its limits, and they keep running into the same problem: the test suite breaks down, manual testing becomes the bottleneck, and eventually someone asks why QA is delaying the release.

But you and I both know that QA is not the problem. It’s just that the dev workflow has evolved and the QA workflow hasn’t.

Why It Doesn’t Work Anymore

The average developer using modern AI coding tools like Cursor or Copilot is now merging 4.1 pull requests each week. This represents a productivity increase of 46% over the last three months, as per DX’s AI tooling benchmark report. However, your QA team’s capacity stayed flat during that same quarter. It’s quite a gap, but it’s nothing that an AI QA team structure can’t close.

Many team leaders say, “We’ll just add more QA resources.” But if you do the math, you will see that, as developer productivity continues to increase (and every indicator says it will), you’ll need to keep doubling your QA headcount just to keep pace. No CFO would approve that, and even if they did, you would end up with more people doing the same inefficient work.
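To make that math concrete, here is a rough sketch. It rests on two assumptions that are mine, not the DX report’s: that the 46% quarterly increase in merged pull requests keeps compounding, and that QA workload scales linearly with PR volume. The function name `required_qa_headcount` and the starting team of eight are hypothetical.

```python
import math


def required_qa_headcount(current_headcount: int,
                          quarterly_growth: float,
                          quarters: int) -> int:
    """Headcount needed if QA workload grows in step with PR volume.

    Assumes (for illustration only) that growth compounds every quarter
    and that one unit of extra PR volume needs one unit of extra QA.
    """
    workload_multiplier = (1 + quarterly_growth) ** quarters
    return math.ceil(current_headcount * workload_multiplier)


# Hypothetical team of 8, with the 46% quarterly figure quoted above.
for quarter in range(5):
    print(quarter, required_qa_headcount(8, 0.46, quarter))
```

Under these assumptions, the team of eight would need to be 18 people after two quarters and 37 after a year, which is why hiring alone rarely closes the gap.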

The teams that have been able to successfully overcome this challenge do not necessarily have the largest budgets for QA. They are simply the ones who have recognized that the testing issue is not just about the headcount. It’s got more to do with the structure.

New QA Roles for 2026

Those teams that approach restructuring as a roles problem rather than a headcount problem are the ones that are actually keeping up. Let’s take a look at some new and important QA roles below.

Quality Strategist

This is one of the key roles in modern QA teams. It has grown out of the traditional QA lead role, but the focus has changed: instead of managing who runs which tests, this person decides what actually needs to be tested and why it matters in the first place.

They focus on setting priorities, reviewing overall testing and the performance of AI agents, and identifying where human attention is really needed.

Test Systems Engineer

The test systems engineer maintains the infrastructure: CI/CD integration, agent configurations, test environments, and monitoring. When a test fails, they figure out whether it was caused by a valid defect or a flaky environment. They provide the technical framework that keeps the entire system running.
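As one illustration of that defect-versus-flaky call, a common first-pass heuristic is to re-run the failing test a few times: if any re-run passes, the failure is treated as flaky, and if every re-run fails, it is treated as a likely defect. This is a minimal sketch of that heuristic, not a description of any particular tool; `classify_failure` and `scripted_test` are hypothetical names.

```python
from typing import Callable, Iterable


def classify_failure(run_test: Callable[[], bool], reruns: int = 3) -> str:
    """Re-run a test that already failed once and label the failure.

    run_test returns True when the test passes. Any passing re-run
    means the original failure was not reproducible, so call it flaky.
    """
    for _ in range(reruns):
        if run_test():
            return "flaky"
    return "likely defect"


def scripted_test(outcomes: Iterable[bool]) -> Callable[[], bool]:
    """Stand-in for a real test runner: replays a fixed list of outcomes."""
    remaining = iter(outcomes)
    return lambda: next(remaining)


print(classify_failure(scripted_test([False, True, True])))    # flaky
print(classify_failure(scripted_test([False, False, False])))  # likely defect
```

A passing re-run can also hide a real race condition, so a "flaky" label is a reason to look at the environment, not a reason to ignore the test.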

Most current automation engineers fit this role, since they already understand the technical components.

Domain QA Specialist

People generally underestimate domain QA specialists, but here’s the thing: AI can’t replicate deep product knowledge, because that knowledge is context-dependent. Without that context, an agent can’t understand why a particular edge case matters to a specific customer segment.

That’s where a domain QA specialist comes in. They know the edge cases better than anyone else on your team, and their product expertise cannot be replaced. Plus, they can spot where a UI will break in a weird user flow before it happens, and they understand your users well enough to test behaviors that agents tend to miss.

Nowadays, their job is to write test intents, which are the high-level ideas that AI agents execute.
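To give a feel for what a test intent might contain, here is a hypothetical sketch. The `TestIntent` class, its fields, and the checkout example are all invented for illustration and are not any vendor’s actual format; the point is that the specialist writes down the goal and the reason it matters, and leaves the click-by-click steps to the agent.

```python
from dataclasses import dataclass, field


@dataclass
class TestIntent:
    """A high-level description of what to verify, not how to click through it."""
    goal: str
    why_it_matters: str
    preconditions: list[str] = field(default_factory=list)
    success_criteria: list[str] = field(default_factory=list)

    def to_prompt(self) -> str:
        """Render the intent as plain text an agent could be given."""
        lines = [f"Goal: {self.goal}", f"Why it matters: {self.why_it_matters}"]
        lines += [f"Precondition: {p}" for p in self.preconditions]
        lines += [f"Success when: {c}" for c in self.success_criteria]
        return "\n".join(lines)


# Hypothetical example written by a domain specialist.
intent = TestIntent(
    goal="A returning customer with an expired saved card can still check out",
    why_it_matters="Renewing customers hit this flow more than new signups do",
    preconditions=["Account has one saved card that is past its expiry date"],
    success_criteria=[
        "User is prompted to update the card",
        "Order completes after the card is updated",
    ],
)
print(intent.to_prompt())
```

The `why_it_matters` field is the part only a domain specialist can fill in, which is the argument of this section.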

Transition Paths for Existing Team Members

New roles get announced, but people are left on their own to figure out what it means for them.

And that’s where most restructures get it wrong. It’s important to clearly explain how each person can move into these roles and what that transition actually looks like.

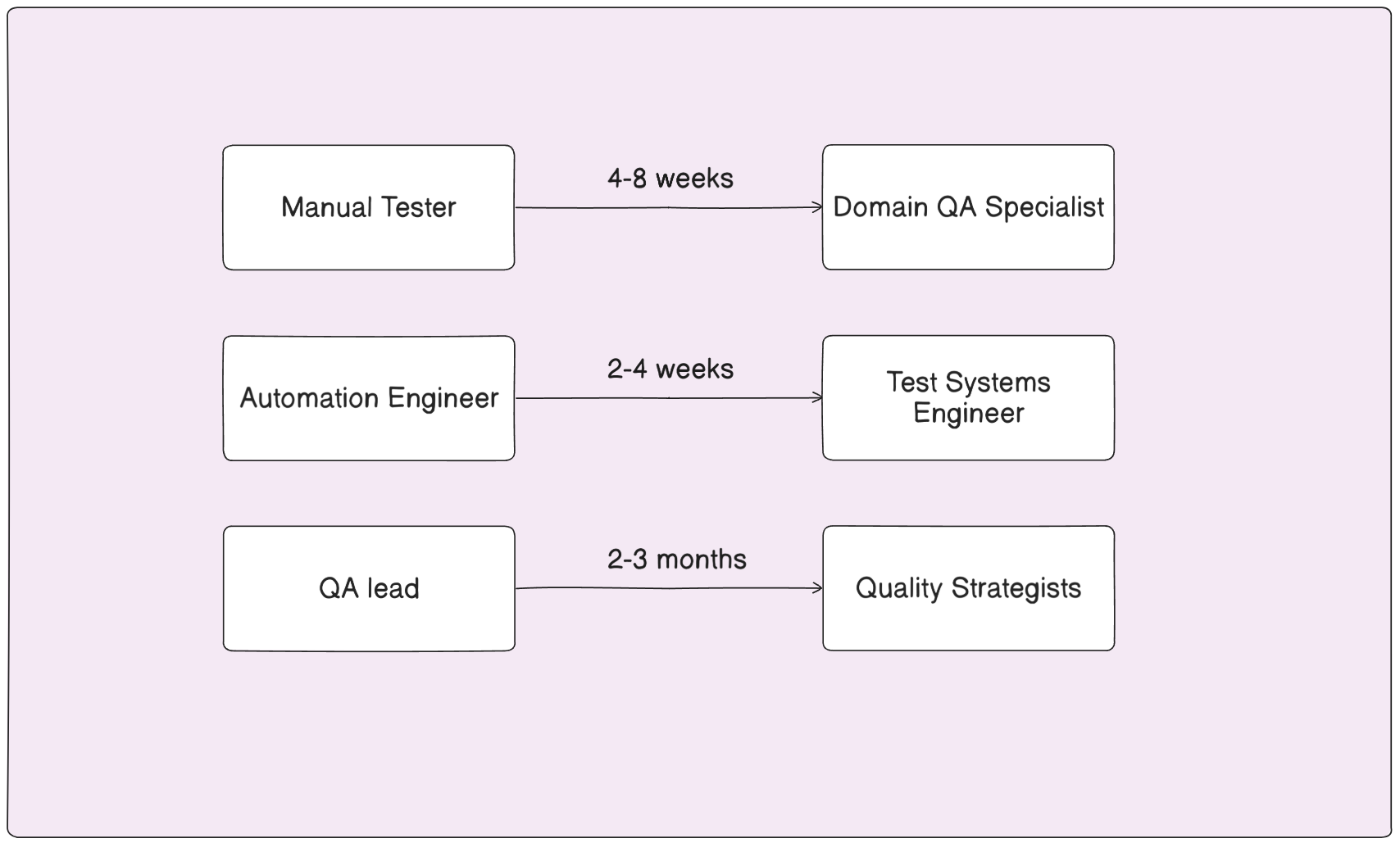

Manual Testers → Domain Specialists

Your manual testers are your product’s knowledge specialists, but most leaders probably don’t frame it that way.

They’re your assets, and you don’t want them spending their whole day clicking through the same test cases. Instead, they need to learn how to work with agents: how to write a test intent, how to specify the test goal and evaluate the output, and how to do real exploratory testing (not “exploratory” in the sense of re-running test scripts).

This transition usually takes 4-8 weeks of hands-on work before it feels natural.

Automation Engineers → Test Systems Engineers

This is the most straightforward transition.

The majority of automation engineers already possess the CI/CD knowledge and understand the causes of test variability. They have years of experience working on infrastructure issues. You just need to provide them with ownership of the test platform itself.

They’ll still need to learn the AI agent configuration side, which usually takes weeks of focused work on the infra layer.

QA Lead → Quality Strategist

The job description doesn’t look much different on paper. Coverage and risk are still their area of expertise, but what they actually do day to day changes completely.

Instead of managing people and tracking test runs, they’re now making judgment calls about what’s actually worth testing. Some QA leads take to this immediately, whereas others need a few weeks to find their footing because the feedback loops they were used to are just gone.

Give it two to three months before you decide whether someone has settled into this version of the role.

What to Tell Your Team

Nowadays, when team members hear the word “AI”, they assume their job is gone. That’s a reasonable assumption, given how restructuring usually goes.

But that’s not what’s happening here.

Their jobs aren’t going anywhere; we’re just automating the tedious parts.

A good idea is to frame it as career growth because, honestly, that’s exactly what it is. You are asking people to go from test executors to quality owners. In my book, this is a promotion in responsibility for them, even if their current title remains unchanged.

I’ve seen leaders who announced a restructure and then spent weeks “working out the details.” The worst approach is telling someone they’re losing their role under the old model without explaining how they fit into the new one. Have the individual transition paths developed before you notify the team, and show each person exactly where they fit in. Trust me, most people will be relieved after working on the same regression suite every week for their entire career.

Wrap-up

I know what you’re thinking, and it’s worth saying outright: restructuring doesn’t mean reducing headcount. I mean, sure, Pricer went from 8 QA engineers to 2 and increased coverage, but that was a broader company restructure, not the goal of the exercise.

When you reorganize your QA department, what you’re really after is a team where every individual is doing work that requires their judgment. You don’t want someone who simply runs regression suites for the fifth consecutive sprint or maintains brittle test scripts that fail when someone changes the color of a button. You want them doing work that actually requires human reasoning.

A QA team structured this way will be more productive and, as a result, more motivated. If you’d like to learn more about how Pricer made changes to their QA team, check out the full case study. It’s the cleanest example I’ve seen of a team making this work without losing their sanity.

Book a demo with QA.tech and find out how AI agents fit into your model.