Companies are trying to pump out 10x the code using AI without improving their testing infrastructure. Sure, your PRs doubled, you pushed features, and management reports AI adoption to the board. But the test strategy you built was not meant for this speed.

If you’re a CTO or a QA lead, you’re likely seeing the “velocity gap” firsthand. Read on to learn why traditional testing breaks down as the codebase starts to move at AI’s pace. You will also find some ideas that will help you keep up.

When More Code Isn’t Just More Work

The same story repeats across different companies: the app was deployed to staging, and things looked good. This morning, you have three regressions on the staging environment. Your CI/CD pipeline breaks more often, and your QA team spends its time keeping the tests green rather than testing the new features of your product.

A Faros report showed that development teams with AI adoption are seeing 47% more PRs per day. That sounds great until you consider what it does to your test suite. More code naturally expands the number of states your product can enter, and that pressure is easy to underestimate.

Our thought process used to be linear. More features meant proportionally more test effort: write the feature, write the tests, and move on. However, when you ship features at 3x or 5x speed, you aren’t just increasing the work for the compiler. You’re also exponentially increasing the number of possible states your app can enter.
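To see why the growth is not linear, here is a rough back-of-the-envelope sketch. It assumes that every feature adds one independent on/off toggle, which is a simplification: real features overlap and constrain each other, so treat the result as an upper bound rather than a measurement. The function name `countStates` is made up for this illustration.

```javascript
// Assumption: each feature contributes one independent on/off toggle,
// so n toggles can combine into 2^n distinct application states.
function countStates(independentToggles) {
  return 2 ** independentToggles;
}

// Tripling the number of toggles does far more than triple the states.
for (const toggles of [5, 10, 15]) {
  console.log(`${toggles} toggles -> ${countStates(toggles)} possible states`);
}
```

Going from 5 to 15 toggles is 3x the features, but the number of combinations a test suite could in principle have to cover grows from 32 to 32,768.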

But here’s the thing: you can’t grow your QA headcount by 300% or ask your engineers to spend 60% of their time writing tests rather than the normal 30%. The gap between what needs testing and what gets tested is widening very quickly, and things are getting complex. AI writes comprehensive code that handles multiple scenarios, say six of them, even when you only asked for one or two. That may seem advantageous until you realize that all six scenarios require test coverage, and half of them are edge cases you never considered could occur.

AI Code Looks Right but Fails Differently

One thing I like about AI-generated code is that it looks clean (and the comments are just the cherry on the cake). It rarely fails with syntax errors, and it follows linting rules perfectly, even the custom ones defined within the project. Most of the time, it passes code review as well. So then, how does it fail?

The problem lies in the fact that human reviewers are snowed under with PRs. That makes it harder for them to catch logic issues and missing null checks, or to test new use cases they haven’t considered before.

AI is likely to hallucinate edge cases or ignore the business rules of the legacy system you are working with. It may offer you valid JavaScript methods that do not fit with how your database handles concurrency.

Let’s say you’re building a cart total for an invoice management system, and you have this test:

expect(calculateTotal(items)).toBe(20);

AI helps refactor the pricing logic, and now the test calls the new function:

expect(computeInvoiceTotal(cart)).toBe(20);

Same logic, right? Not quite. When a discount code is applied, computeInvoiceTotal computes tax before the discount rather than after, and it rounds to a different value than you would. Your test still passes since it’s checking the wrong thing, but Finnish customers are being charged an extra 4.37 euros in taxes.
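To make the ordering problem concrete, here is a minimal sketch. It assumes a fixed-amount discount, amounts stored in cents, and a 25% tax rate. The `discountCents` parameter, the function bodies, and all the numbers are hypothetical and are not taken from any real pricing module.

```javascript
// Hypothetical values: amounts are in cents, and 25% is an assumed tax rate.
const TAX_RATE_PERCENT = 25;

function subtotalOf(items) {
  return items.reduce((sum, item) => sum + item.priceCents * item.qty, 0);
}

// Original behavior: subtract the discount first, then tax the reduced amount.
function calculateTotal(items, discountCents = 0) {
  const taxable = subtotalOf(items) - discountCents;
  const tax = Math.round((taxable * TAX_RATE_PERCENT) / 100);
  return taxable + tax;
}

// Refactored behavior: tax the full subtotal, then subtract the discount.
function computeInvoiceTotal(items, discountCents = 0) {
  const subtotal = subtotalOf(items);
  const tax = Math.round((subtotal * TAX_RATE_PERCENT) / 100);
  return subtotal + tax - discountCents;
}

const cart = [{ priceCents: 10000, qty: 1 }];

// Happy path (no discount): both versions agree, so the old test stays green.
console.log(calculateTotal(cart), computeInvoiceTotal(cart));

// With a 10.00 discount, the refactored version taxes money the customer never paid.
console.log(calculateTotal(cart, 1000), computeInvoiceTotal(cart, 1000));
```

In this sketch the two functions only diverge once a discount is involved, which is exactly the case a happy-path assertion never exercises. A test that passes a discount and checks the tax portion separately would expose the difference.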

This wouldn’t be caught by code reviewers because the implementation looks correct in isolation. During the code review, they see properly structured code and all tests passing. As a result, they approve the PR. The issue only becomes apparent once the support ticket volume starts to grow.

While AI is capable of generating reasonable code based on existing patterns, it doesn’t have any context about your business rules regarding, say, holiday promotional pricing and tax-exempt user types. Therefore, it cannot account for either of those scenarios. And your existing tests do not find these gaps, since they were designed to validate the “happy path” of the business process and never to challenge the assumptions behind it.

Why Scripted Tests Start Falling Apart Faster

This brings us to the core problem, which is test brittleness at scale. Many test suites are fragile by nature, and constant product changes only make this worse. A small UI change, like a relocated button or different CSS class, can suddenly cause a dozen tests to fail or behave unpredictably.

Developers have come up with consistent coding practices. We reuse naming conventions, maintain guidelines, and try to keep components structured in predictable ways. But AI doesn’t recognize these similarities unless those patterns are enforced strictly. Over time, tests that rely too heavily on specific selectors or structures become unstable and harder to maintain.

If your QA team is spending more time fixing flaky tests that break because of minor Tailwind class changes in selectors than testing new features, you’re not really doing quality assurance anymore. You’re just constantly patching cracks.
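The brittleness is easy to reproduce in miniature. The sketch below uses a plain array as a stand-in for a rendered page, so it runs without a browser. The helpers `findByClass` and `findButtonByText` and the class names are invented for this example and do not come from any particular test framework.

```javascript
// A tiny stand-in for a rendered page before and after a styling change.
const pageBefore = [{ tag: "button", className: "bg-blue-500 px-4", text: "Submit" }];
const pageAfter = [{ tag: "button", className: "bg-indigo-600 px-6", text: "Submit" }];

// Brittle lookup: coupled to a styling class that designers are free to change.
function findByClass(page, className) {
  return page.find((el) => el.className.split(" ").includes(className));
}

// More resilient lookup: coupled to what the user actually sees and clicks.
function findButtonByText(page, text) {
  return page.find((el) => el.tag === "button" && el.text === text);
}

console.log("class lookup, before:", Boolean(findByClass(pageBefore, "bg-blue-500")));
console.log("class lookup, after: ", Boolean(findByClass(pageAfter, "bg-blue-500")));
console.log("text lookup, before: ", Boolean(findButtonByText(pageBefore, "Submit")));
console.log("text lookup, after:  ", Boolean(findButtonByText(pageAfter, "Submit")));
```

The button still works for users in both versions of the page, yet only the class-based lookup stops finding it after the restyle. Multiply that by every selector in a large suite and you get the maintenance load described above.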

The business impact is significant:

- Loss of trust: When developers see failing builds constantly, they start to ignore them because failures have become normalized.

- Bypassing assertions: Since engineers are under pressure to ship quickly, they will start to comment out assertions just to get a green light.

- Fear of refactoring: The fear of toppling a “house of cards” test suite makes teams hesitant to refactor, even though AI has the potential to make the whole process easier and safer.

At the same time, leadership doesn’t even realize this is happening. They see the green dots and checkmarks and just assume everything is fine in terms of quality.

How Teams Should Adjust Their Testing Strategy (and How QA.tech Does It)

The best way to fix all this is to move from automation to autonomy.

Your test system needs to be as smart as the tools generating the code. If your code is being improved using AI, your tests will need to go through the same process, as well. Here’s an example: instead of verifying that the function calculateTotal is called with valid input, you would want to verify that a user was able to complete the checkout process and was charged properly. In addition, rather than checking whether the button has the ID submit-btn, you should make sure that the entire process of submitting a form works as it should.
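Here is a small sketch of that difference, using an in-memory checkout so it runs on its own. Everything in it (`createCheckout`, `buggyPricing`, the stray surcharge, the cart contents) is hypothetical and only meant to contrast the two styles of check. It is not how any specific tool implements this.

```javascript
// Hypothetical in-memory checkout that records what the user was charged.
function createCheckout(pricing) {
  const charges = [];
  return {
    charges,
    submit(cart) {
      const amountCents = pricing.calculateTotal(cart);
      charges.push({ amountCents });
      return { status: "confirmed", amountCents };
    },
  };
}

// A deliberately wrong pricing module standing in for a bad refactor.
const calls = [];
const buggyPricing = {
  calculateTotal(cart) {
    calls.push(cart);
    // Bug for illustration: a stray 1.00 surcharge slipped in.
    return cart.reduce((sum, item) => sum + item.priceCents, 0) + 100;
  },
};

const cart = [{ priceCents: 1500 }, { priceCents: 500 }];
const checkout = createCheckout(buggyPricing);
const result = checkout.submit(cart);

// Implementation-level check: was calculateTotal called once with a non-empty cart?
const implementationCheckPasses = calls.length === 1 && calls[0].length > 0;

// Behavior-level check: did checkout complete, and was the user charged 20.00?
const behaviorCheckPasses =
  result.status === "confirmed" && checkout.charges[0].amountCents === 2000;

console.log({ implementationCheckPasses, behaviorCheckPasses });
```

The implementation-level check is satisfied because the function was called correctly, while the behavior-level check fails because the customer was overcharged. Only the second one reflects what the user experienced.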

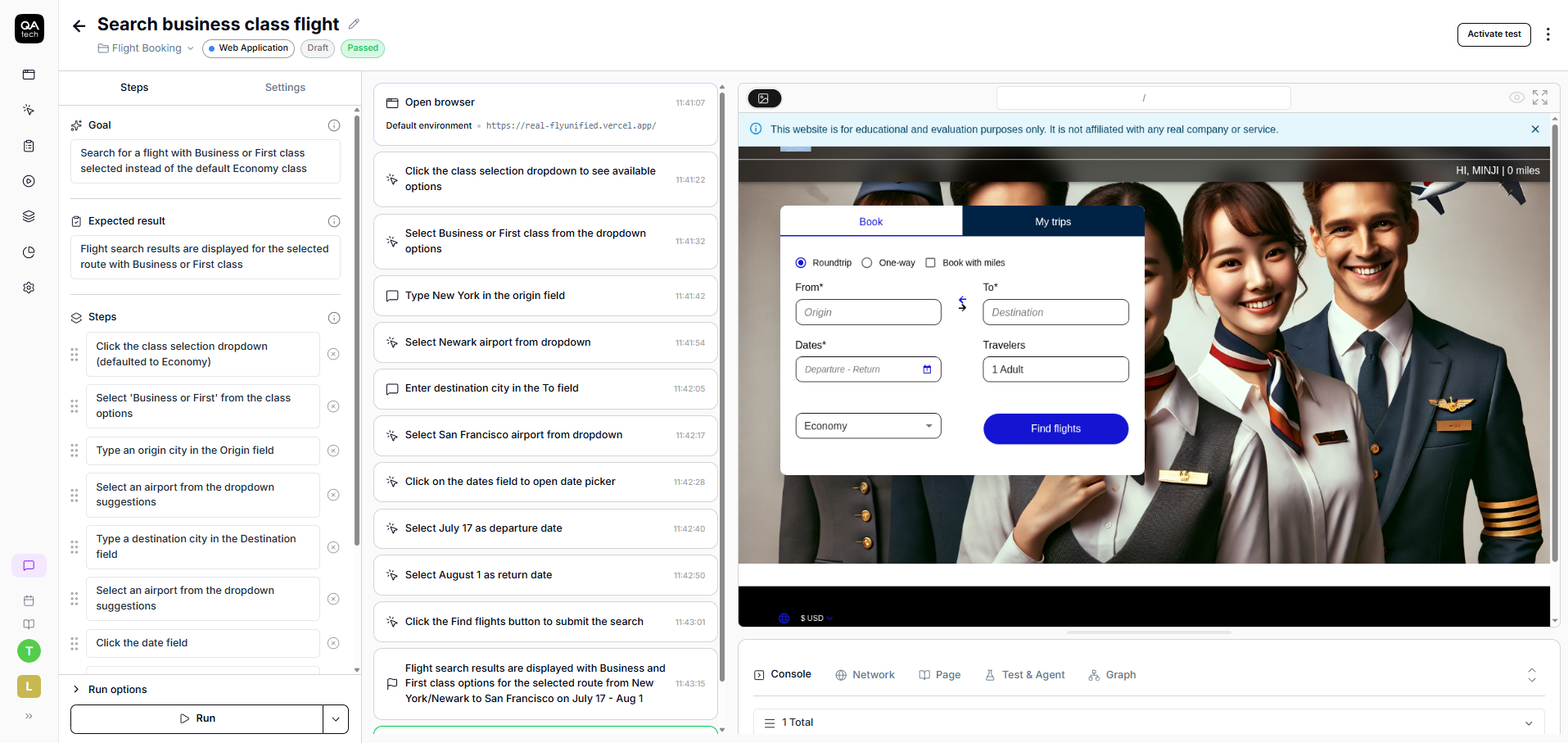

And that’s where QA.tech comes in. It does not generate scripts that say “click on this specific element and check the specific DOM node.” It mimics how real users interact with your application and builds a structural understanding of what matters.

Here’s what that looks like in practice:

QA.tech gets triggered with each new PR and reports the issues it catches along with console logs, network errors, and reproduction steps.

For example, when your button ID changes from submit-btn to primary-action, a scripted test breaks immediately. QA.tech sees that the same user journey still works. The form gets filled, submission succeeds, confirmation appears, and everything adapts without manual intervention.

That’s the difference between self-healing and self-breaking tests.

Self-breaking tests are tightly coupled to implementation. They know about specific CSS selectors, function names, and DOM structure. Change any of those, and the test fails even though the actual user behavior hasn’t changed.

On the other hand, self-healing tests focus on behavior and intent. They understand that a checkout flow involves adding items, entering payment info, and completing a purchase. When the implementation details change, the test recognizes that the behavior still exists and continues working. This means different button classes, refactored functions, and restructured components are no longer going to break your test suite.

Moving Forward

The days of slow manual coding are long gone. The AI-driven boom is accelerating, and things are only going to get faster. If you’re still approaching your testing process the old way in 2026, you risk being left behind.

Your idea of “successful testing” needs to evolve to keep pace with this new era. Here’s what you can do:

- Stop attempting to get 100% coverage on your code with manual scripts. You can’t win this battle. Focus instead on the critical user flows that drive your product and business, following the 80/20 rule, where 20% of flows generate 80% of value.

- Test to understand high-level user intent. If users can't complete their processes, no amount of other testing will make a difference.

- Use autonomous testing tools to allow AI to perform the “dumb” work of maintaining E2E tests. Let engineers concentrate on the architecture that can grow your business.

Good testing is all about building real confidence. Start with the user flows that matter most, make them resilient to UI changes, and grow your coverage based on real issues you encounter in production.

Want to see how behavior-based testing handles AI velocity? Schedule a call with QA.tech today, and see what it’s like when UI changes don’t break your test suite.