In the title, I said “hidden tax,” yes, but you're here because you've noticed it. You've seen your team spend hours every week on maintenance. Every push, refactor, or release means several tests need to be updated. And way too many times, you've had to choose between shipping blind to release a feature on time and waiting for testing to finish and releasing late.

At the beginning of a project, test maintenance is a non-issue. Since the app is still young, the focus is on creating new scripts. But as it matures and users sign up, testing expands. Often, a suite ends up with thousands of tests that become unusable 8 months down the line. The team then has to leave it as is or rebuild, and either choice means more maintenance work.

So, let’s crunch some numbers. How much time do you think your team spends maintaining tests? How much money do you think that is? Double it, maybe even triple it, and you'll still be aiming too low.

Luckily, you've come to the right place. In this article, I'll show you how much it costs your team to maintain your Selenium or Playwright suites, and why it won’t get better, seeing that the system itself is flawed. Then I'll explain how a simple shift in perspective eliminates endless maintenance and saves more than $200,000 per year for a small team of 5 devs, or more than $250,000 for a team of 3 QA engineers.

The cherry on top is that you don't need to tear down your entire existing system today. You can start small, by implementing agentic testing with QA.tech.

Ready? Let's begin.

What Is the Hidden Cost of Maintaining Test Suites?

The hidden cost of test maintenance is the unplanned effort required to keep a test suite running and the cases passing. Think of all the hours spent updating scripts because a dev pushed a refactor, the time spent fixing flaky tests, or the resources consumed by unusable suites.

Even though it does not appear in budgets or reports, a heavy maintenance burden shows up in other ways. First, sprint velocity slows because devs can't test fast enough. They have to update scripts and write new ones before they can fully test a feature. Second, as suites grow, you notice devs are not writing new tests. Instead, most of the QA work is maintaining existing cases.

Even more commonly, large Playwright suites and other legacy setups suffer from flakiness. And the more flaky tests there are, the more red builds you get, not because of code bugs, but because of bugs in tests. This leads to many false positives, and consequently, low confidence in testing. Yet the QA team is as busy as ever, even though they are getting less done and their testing cycles are getting longer.
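To see why a handful of flaky tests produces so many red builds, here is a rough sketch. It assumes each flaky test fails independently with the same probability on every run, which is a simplification, and the 1% flake rate and the suite sizes are made-up inputs rather than measurements.

```python
def red_build_probability(flaky_tests: int, flake_rate: float) -> float:
    """Chance that at least one flaky test fails in a single run.

    Assumes every flaky test fails independently with probability
    `flake_rate` (a simplifying assumption, not a measured value).
    """
    return 1 - (1 - flake_rate) ** flaky_tests


if __name__ == "__main__":
    # Hypothetical inputs: a 1% flake rate per test, growing suite sizes.
    for count in (10, 50, 200):
        chance = red_build_probability(count, flake_rate=0.01)
        print(f"{count} flaky tests -> {chance:.0%} chance of a red build")
```

Under these assumptions, the chance of a red build climbs quickly as the number of flaky tests grows, even when every individual test looks almost reliable.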

If you’re experiencing any of those symptoms, you’ve got high maintenance costs, which are generally felt across three areas:

- Labor costs: Engineers spend more time testing a feature than before, and the QA team takes longer to deliver the same amount of work.

- Infrastructure costs: You end up paying to maintain servers and environments that run for longer than they should and for tests that nobody trusts.

- Product costs: When a test suite requires many hours to maintain, test coverage suffers, leaving gaps through which bugs slip to live users.

Enough with the words, though. Time to do the math.

Real Numbers: Average Yearly Spend on Test Maintenance

Take, for instance, a team of 5 full-time engineers, paid an average of $85 per hour, with each dev spending 15% of their time maintaining tests. In a 40-hour work week, that time is:

6 hours * 5 = 30 hours

Put together, it is almost full-time work for one engineer. Weekly costs would be:

$85 * 30 hours = $2,550

Here’s what it amounts to annually:

$2,550 * 48 weeks = $122,400

30 hours * 48 weeks = 1,440 hours

If you’re running a QA team of 3 full-time engineers, test maintenance time skyrockets. Many spend 60% to 70% of their work week fixing broken tests. If they get paid $50 an hour, it becomes at least:

24 hours a week * $50 * 48 weeks = $57,600 per year

Multiply it by 3 engineers, and it’s $172,800.

As for other costs, if your team deploys 20 times a week and devs spend an hour each time figuring out why tests are failing, that is 20 hours a week spent diagnosing failures. Multiply that by $85 per hour and 48 workweeks in a year, and the total comes to $81,600.

Now let’s add those up.

$122,400 + $81,600 = $204,000

And that’s for test maintenance only. For a QA team, that would be:

$172,800 + $81,600 = $254,400
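If you want to plug in your own numbers, the arithmetic above fits in a few lines of Python. This is only a sketch: the `Team` class and the function names are mine, and the 48-week year and 40-hour week are the same assumptions used in the calculations above.

```python
from dataclasses import dataclass

WORK_WEEKS_PER_YEAR = 48  # same assumption as the figures above
HOURS_PER_WEEK = 40


@dataclass(frozen=True)
class Team:
    headcount: int
    hourly_rate: int          # USD per hour
    maintenance_percent: int  # share of the week spent maintaining tests

    def weekly_maintenance_hours(self) -> float:
        return self.headcount * HOURS_PER_WEEK * self.maintenance_percent / 100

    def annual_maintenance_cost(self) -> float:
        return self.weekly_maintenance_hours() * self.hourly_rate * WORK_WEEKS_PER_YEAR


def annual_triage_cost(deploys_per_week: int, hours_per_deploy: int, hourly_rate: int) -> int:
    """Yearly cost of working out why tests failed after each deployment."""
    return deploys_per_week * hours_per_deploy * hourly_rate * WORK_WEEKS_PER_YEAR


dev_team = Team(headcount=5, hourly_rate=85, maintenance_percent=15)
qa_team = Team(headcount=3, hourly_rate=50, maintenance_percent=60)
triage = annual_triage_cost(deploys_per_week=20, hours_per_deploy=1, hourly_rate=85)

if __name__ == "__main__":
    print(f"Dev team: ${dev_team.annual_maintenance_cost() + triage:,.0f}")  # Dev team: $204,000
    print(f"QA team:  ${qa_team.annual_maintenance_cost() + triage:,.0f}")   # QA team:  $254,400
```

Change the headcount, rate, or percentage to match your own team and see where your total lands.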

Imagine, then, what would happen if you had more engineers, a larger test suite, and more deployments. Also consider the time spent rerunning failed tests after fixing them, alongside cloud infrastructure costs.

Many startups already have tech teams bigger than 5. If you think of a mid-size org with 20, 50, 100, or more engineers in dev and QA roles, the costs scale accordingly: at the same $85 rate and 15% maintenance share, 100 engineers alone come to roughly $2.4M a year.

Why Does the Tax Exist?

Tightly-coupled selectors are flawed. Some engineers argue that the solution lies in improved testing strategies: use better selectors, abstract them so you only fix a selector once rather than in multiple tests, and follow best practices.

It sounds pretty reasonable until you realize that even with the best strategies, the problem doesn't go away. Take, for instance, this Playwright engineer on Reddit who complained that test maintenance takes 70% of their time.

The issue here goes beyond best practices. In fact, the system itself is the problem:

- UI coupling: Dependence on DOM structure and tightly-coupled CSS selectors leads to brittle tests that fail often.

- State coupling: Tests fail due to assumed DB states. They depend on specific values already existing in a shared database. Plus, there’s the problem of stale data.

- Environment drift: Differences between development, staging, and production environments cause inconsistencies.

All these factors lead to non-determinism, which causes flakiness. In fact, some teams even report that up to 40% of their test suite is flaky.
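The UI coupling point is easy to reproduce without a browser. The sketch below uses only Python's standard library as a stand-in for a real test runner (it is not Playwright or Selenium code), and both markup snippets are hypothetical. It looks up the same checkout button twice: once by a CSS class, and once by the text a user actually sees.

```python
from html.parser import HTMLParser


class ButtonCollector(HTMLParser):
    """Collects the class list and visible text of every <button>."""

    def __init__(self):
        super().__init__()
        self.buttons = []
        self._current = None

    def handle_starttag(self, tag, attrs):
        if tag == "button":
            classes = (dict(attrs).get("class") or "").split()
            self._current = {"classes": classes, "text": ""}

    def handle_data(self, data):
        if self._current is not None:
            self._current["text"] += data

    def handle_endtag(self, tag):
        if tag == "button" and self._current is not None:
            self._current["text"] = self._current["text"].strip()
            self.buttons.append(self._current)
            self._current = None


def buttons_in(html):
    collector = ButtonCollector()
    collector.feed(html)
    collector.close()
    return collector.buttons


def find_by_class(html, css_class):
    """Implementation-coupled lookup: depends on a styling class."""
    return next((b for b in buttons_in(html) if css_class in b["classes"]), None)


def find_by_text(html, text):
    """Intent-based lookup: depends only on what the user sees."""
    return next((b for b in buttons_in(html) if b["text"] == text), None)


# Hypothetical markup before and after a styling refactor.
BEFORE = '<div id="checkout"><div class="actions"><button class="btn btn-primary">Pay now</button></div></div>'
AFTER = '<section id="checkout"><footer><button class="cta">Pay now</button></footer></section>'

if __name__ == "__main__":
    print(find_by_class(BEFORE, "btn-primary") is not None)  # True
    print(find_by_class(AFTER, "btn-primary") is not None)   # False: the test breaks
    print(find_by_text(AFTER, "Pay now") is not None)        # True: the user flow still works
```

Nothing changed for the user between the two versions, yet the class-based lookup stops working. That gap between "the test failed" and "the product is broken" is where maintenance hours go.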

Remove Maintenance with Agentic Testing

You cannot solve the QA problem by throwing more bodies or time at it. What you need is a shift in perspective. Instead of testing implementation and DOM structure, what if you focused on user flows?

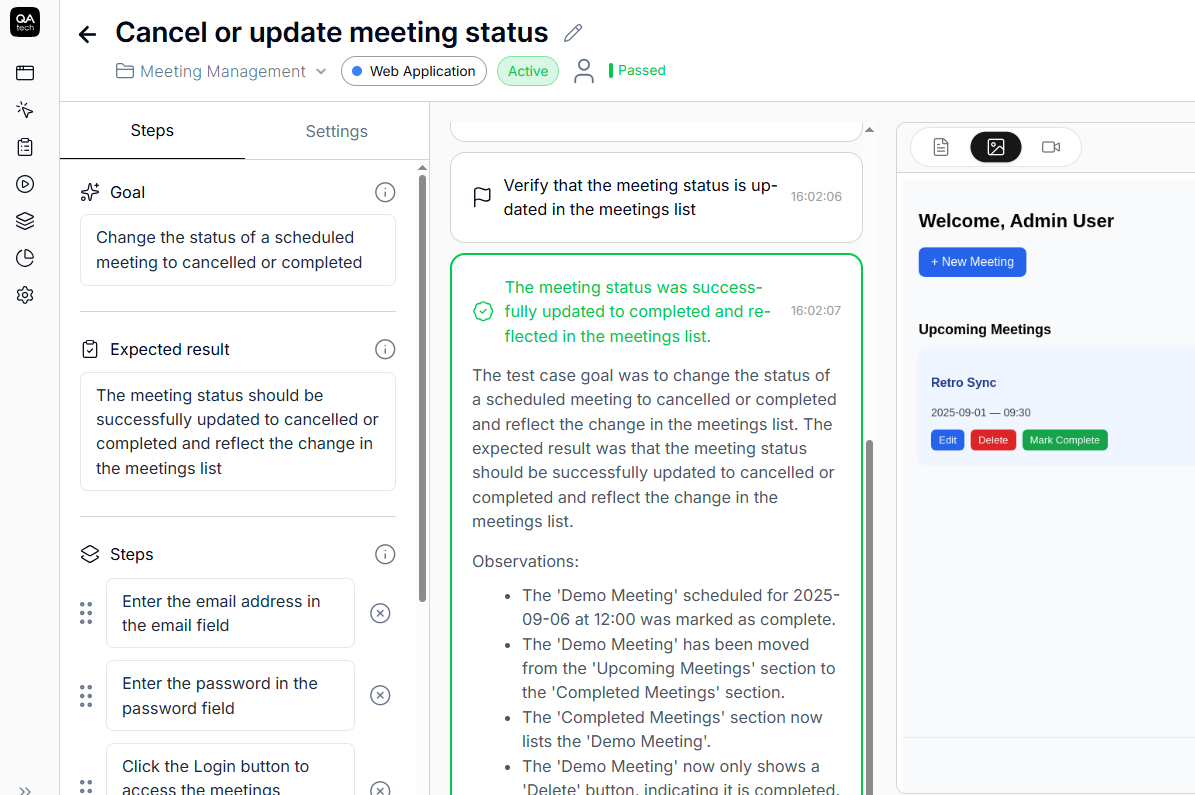

Agentic testing with QA.tech involves interacting with an app just like a real user would. Agents test against goals such as onboarding, filling out forms, and completing checkout. They extract user flows from your UI and group them into test cases.

Unlike with legacy automated testing, agents don't care about specific test steps or implementation details. Their only focus is the user’s goal. Therefore, when a dev refactors checkout, tests don't break as long as the agent can still complete a purchase.

This method is better for several reasons. First, flaky tests are all but gone, and when a test fails, you know there's an actual problem. Second, test steps auto-adapt to UI changes, so when you refactor or change the user flow, you don't need to update any test cases. Third, you can have as many test cases as you want without worrying about maintenance debt.

What Happens When the Hidden Maintenance Tax Is Gone?

When you shift from selector-based testing to goal-based agentic testing, you minimize test maintenance and eliminate a lot of the problems that come with it.

- Minimal maintenance: Agents self-adapt after UI changes. With each refactor, test steps are modified, while the goals remain the same.

- Near-zero flaky tests: Agents use the app and complete flows like real users would. When a test fails, you know there's an actual bug.

- Framework-agnostic test suite: Migrate your codebase to a different framework without rewriting your entire suite.

- High testing confidence: False positives are almost eliminated, and devs can refactor without fear.

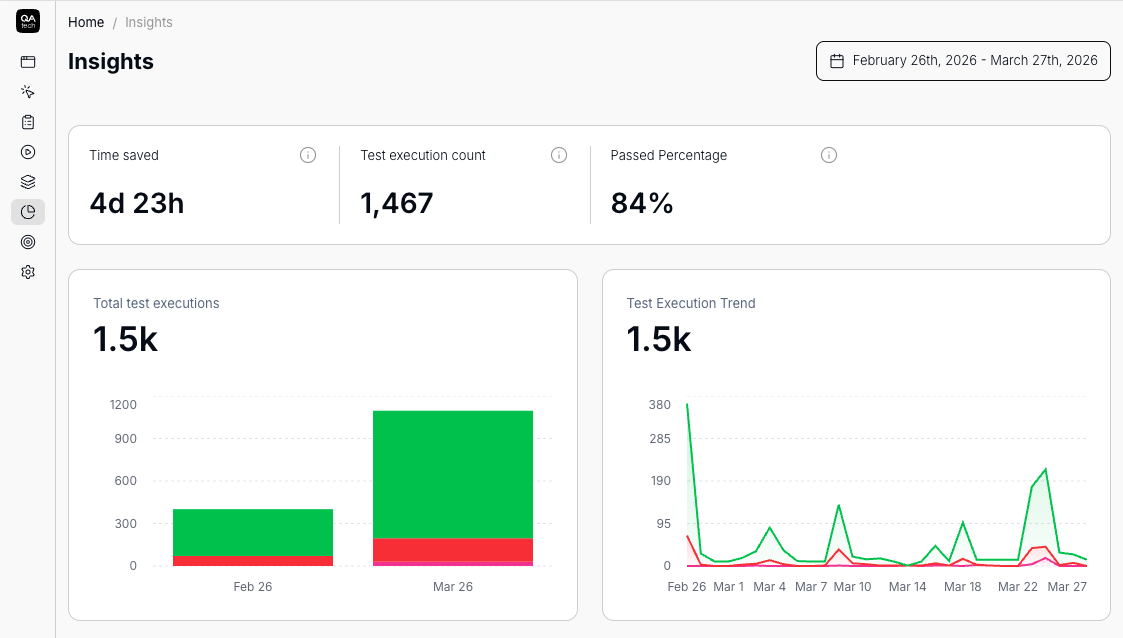

- Automated reports: QA.tech screen-records every test. Metrics, pass rates, and bugs are easy to track.

- Freed time: You’ll get to free up hundreds of hours for more productive tasks.

Case Studies: Impact of Agentic Testing with QA.tech

Teams like AstraZeneca, Nordea, Virtusize, Any.com, and Pricer have managed to cut thousands of QA hours each month, reduce spend, and ship faster using QA.tech. They report an ROI of up to 529%. Plus, they’ve achieved payback after only 3 months of onboarding.

Pricer

Pricer powers 28,000 stores across more than 70 countries. It provides Electronic Shelf Labels (ESLs) and helps stores implement real-time price updates. QA matters a great deal here, because bugs often mean errors that impact business revenue.

Pricer's team used to struggle with a slow testing process and blocked releases. Their E2E suite had grown out of hand, and there were production bugs. However, after they adopted QA.tech, less time was spent on test setups, test coverage increased, and QA pipelines and release cycles sped up. According to Chris Chalkitis, Chief Digital Officer at Pricer:

"Pricer saves 390 hours of testing each quarter."

Airpelago

A much smaller company with only about 30 employees, Airpelago saw major progress with QA.tech. Before, they had a Playwright setup. The E2E suite was near unusable due to its flakiness. According to Tobias Fridén, the CTO and co-founder:

"Testing the map-based interfaces was almost impossible. Every interaction was flaky."

Once they got started with agentic testing, Airpelago first defined tests for the main user journeys and went from there. Today, QA.tech runs its E2E tests and integrates with GitHub, where commits trigger tests. Fridén adds:

"Before QA.tech, we tiptoed when releasing. Now, we move with confidence. That’s a huge shift for a small but ambitious team like ours."

Marcus Engvall, a developer at Airpelago, chimes in as well:

"Since QA.tech flipped the entire QA process on its head, a lot has changed. We no longer require nearly as much manual QA-testing as we did before, which in turn allows us to test more of our app."

Migrate to QA.tech

Agentic testing with QA.tech uses semantic testing to extract user flows and edge cases. You also get a report for every test run and auto-notifications via your preferred channel.

Getting started is quite easy. Simply sign up, get an API token, and integrate your project's repository. Then you can select test environments and devices, trigger test runs after deployments, and automate PR reviews.

The best part is that you don't have to upend your entire existing test strategy and suites today. Agentic testing layers nicely on top of your current workflows. You can start by implementing it for flaky E2E tests only and go from there.

Ready to migrate to QA.tech? Why don’t you get a demo first?